The Robots Are Fighting Each Other Now

Your insurer uses AI to deny your claim. There are now AI tools built specifically to fight back. Welcome to the arms race nobody asked for.

Markus Grant | theranter.com | stories@theranter.com

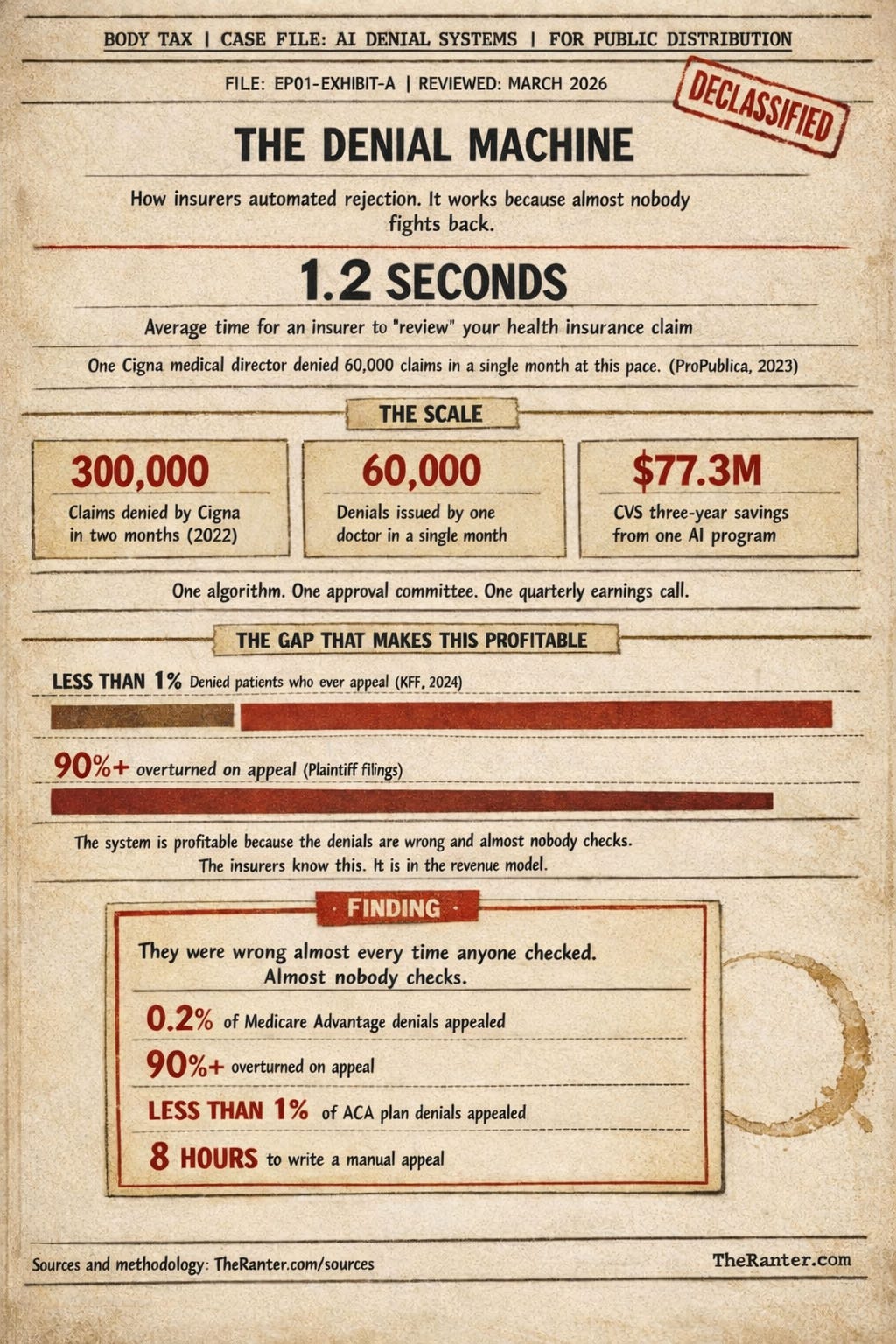

In 2022, a doctor at Cigna denied over 60,000 claims in a single month. ProPublica reported the average review time: 1.2 seconds per case.[1] The doctor allegedly never opened the patient files. He didn’t need to. The algorithm had already decided, and he was just the signature.

That was the denial side. An AI scans your claim and flags it for rejection based on a procedure-to-diagnosis code match. Cigna calls this an “automated rules engine,” which is technically accurate the same way a vending machine is technically a nutritionist. Then a human rubber-stamps the pile. In bulk. Your entire medical history, reviewed by someone who was paid to stamp it, not read it.

Here is the full loop, in plain English. Your doctor orders a procedure. The insurer’s software checks whether your diagnosis code matches a pre-approved list. It doesn’t. The software flags the claim for denial. A medical director signs the rejection, sometimes without opening your file. You receive a letter. If you want to fight it, you have to locate the appeals process, gather your medical records, pull your policy documents, identify the specific clinical language the insurer used to deny you, find supporting medical literature, and submit a written response, usually by fax, on a deadline that is not always clearly stated. At no point in this process is anyone on the insurer’s side being paid to ask whether your treatment is medically appropriate. Every step is about cost and documentation, and that is not an accident.
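For the technically curious, the code-matching step in that loop can be sketched in a few lines of Python. This is a toy built on loud assumptions: the procedure and diagnosis codes, the `APPROVED_DIAGNOSES` table, the `Claim` record, and the `flag_for_denial` function are all invented for illustration. Nothing here is Cigna's or any other insurer's actual system.

```python
# Hypothetical sketch of a procedure-to-diagnosis matching rule.
# Every code, name, and table below is made up for illustration;
# this is NOT any insurer's real logic.
from dataclasses import dataclass

# Assumed lookup: procedure code -> diagnosis codes the rule accepts.
APPROVED_DIAGNOSES = {
    "PROC-100": {"DX-A", "DX-B"},
    "PROC-200": {"DX-C"},
}


@dataclass
class Claim:
    claim_id: str
    procedure_code: str
    diagnosis_code: str


def flag_for_denial(claim: Claim) -> bool:
    """Return True when the diagnosis is not on the list for the procedure."""
    approved = APPROVED_DIAGNOSES.get(claim.procedure_code, set())
    return claim.diagnosis_code not in approved


claims = [
    Claim("c1", "PROC-100", "DX-A"),  # pairing is on the list
    Claim("c2", "PROC-100", "DX-C"),  # diagnosis not listed for this procedure
    Claim("c3", "PROC-300", "DX-A"),  # procedure has no list at all
]

# Collect the claims the rule would send to the rejection pile.
flagged = [c.claim_id for c in claims if flag_for_denial(c)]
print(flagged)
```

In this sketch the second and third claims get flagged. Notice what the function never touches: the patient's chart. A rule like this only compares two codes, which is why a human signature on top of it can take seconds.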

Now there’s an appeal side. And it’s also AI.

Welcome to the arms race nobody asked for, where robots are fighting robots over whether you deserve healthcare, and you’re standing in the middle holding a denial letter and a co-pay.

Part I

Holden Karau was a tech worker in San Francisco building search and recommendation tools. Early AI work, the kind that decides what shows up when you type something into a box. Then an SUV ran a left turn across four lanes of traffic and hit her Vespa. She survived. The paperwork almost killed her anyway.

“I’d had a number of denials before, but they were all sort of spaced out. When I got hit by a car, everything just happened all at once. And it was like, oh, this paperwork is insurmountable.”

Holden Karau, Managed Healthcare Executive[2]

She’s a computer scientist. She builds tools for a living. So in 2023, she built one: Fight Health Insurance. You upload your denial letter. The system scans it, identifies why you were denied, cross-references your situation against medical literature and common insurance policy language, and generates an appeal letter. Multiple versions, different angles, ready to submit. It’s free for individual patients. The company makes money selling an enterprise version called Fight Paperwork to medical practices and health systems. Because of course doctors need it too. They’re drowning in the same forms. That last part is the receipt: the paperwork problem is so severe that medical professionals with staff and resources are buying software to fight it alongside their patients.

Down in Research Triangle Park, North Carolina, a nonprofit called Counterforce Health built something similar. Their AI assistant analyzes your denial letter, pulls up your specific insurance policy, searches medical research for supporting evidence, and drafts a customized appeal. Also free for individuals. They charge hospitals and health systems for the professional version.[3] Some Mayo Clinic physicians have recommended it to patients in educational presentations on fighting denials, alongside general-purpose AI tools. Doctors at the Mayo Clinic are telling patients to use chatbots to fight insurance companies. That’s where we are.

For coverage questions rather than active denials, there’s Sheer Health, which lets you connect your insurance account directly and ask plain questions about what you’re actually covered for. And for a full appeal from scratch, there’s Claimable, which charges around forty dollars to generate one from your information.[4] A growing number of people are just going straight to the general AI chatbots. A KFF poll found that a quarter of adults under 30 use an AI chatbot for health information or advice at least once a month.[5] They’re not doing it for fun. They’re doing it because the system that’s supposed to help them is using the same technology against them.

Part II

The absurdity of this situation is hard to overstate, so let me try anyway.

The insurance industry spent years and billions of dollars developing AI tools to deny your claims faster, cheaper, and at greater scale than any human workforce could. They automated rejection. CVS projected $77.3 million in savings over three years from one AI denial program, far exceeding its original $10-15 million estimate.[6] They modeled the denial of your treatment as a line item in a revenue projection.

And now, because those tools worked so well and pissed off so many people, a counter-industry has sprung up selling AI tools to fight the AI tools. The same underlying technology is now deployed on both sides of a denial letter. The insurance company’s AI reads your claim and generates a rejection. Your AI reads the rejection and generates an appeal. The insurance company’s AI reads your appeal and generates a response. Somewhere in this loop, a human being is supposed to be receiving medical care.

“We’re in an AI arms race where as consumers become more savvy and are more empowered by these tools to fight back, the insurers will just, you know, up the ante on their side with the AI.”

Jennifer Oliva, Indiana University law professor, PBS NewsHour[7]

She’s right. And she knows she’s right. And knowing doesn’t fix it.

Part III

Before you run off and upload your denial letter to the nearest chatbot (and I’m not saying don’t, because the data suggests you should fight), there are limits to what these tools can do.

Carmel Shachar at Harvard Law School has flagged the hallucination problem. AI generates text that sounds authoritative and confident, and most of the time the medical information in an appeal letter is accurate. But sometimes it isn’t. The AI might cite a study that doesn’t exist, or misstate a clinical guideline, or describe a drug interaction that’s plausible but wrong. If you’re a software engineer or a lawyer, you might catch it. If you’re a 67-year-old recovering from hip surgery and trying to keep your rehab coverage, you probably won’t.

“It can be difficult for a layperson to understand when AI is doing good work and when it is hallucinating or giving something that isn’t quite accurate.”

Carmel Shachar, Harvard Law School, Stateline[8]

An appeal letter with bad medical facts doesn’t just fail. It can actively hurt your case. The insurer’s reviewer, human or algorithmic, sees the error and uses it to dismiss the whole thing.

The other limit is structural. These tools help you fight individual denials. They do not change the denial rate. They do not change the algorithm. They do not change the business model that treats your claim as a cost to be minimized. If every denied patient in America used AI to generate appeals tomorrow, the insurance companies would adjust. They would add new criteria, build new filters, and design responses to counter what patients are filing. The arms race would escalate, and the patient would still be the one standing in the middle.

This is not an argument against using the tools. Use them. Seriously. The appeal rate in this country is a rounding error. When UnitedHealth’s nH Predict denials were appealed, plaintiffs allege that more than 90% were overturned.[1] Ninety percent. They were wrong almost every time anyone checked. The win rate is embarrassingly high for the insurers because the denials were wrong in the first place, and they were profitable precisely because almost nobody fights. If an AI can cut the time it takes to write an appeal from eight hours to forty-five minutes, that changes who can afford to fight. A single parent with two jobs and a denied MRI just got a weapon she didn’t have last year. That matters. That’s real.

But the tools exist because the system is broken. Every successful AI-generated appeal is proof that the original denial should never have been issued. Using the counter-weapon is not the same thing as finding the cure.

Part IV

Insurance companies have started sending denial letters that explicitly state the claim was “reviewed by an AI program.” They’re telling you to your face. The rejection of your cancer screening, your child’s therapy, your spouse’s medication: processed by software. Not reviewed. Processed. The human name at the bottom of the letter is increasingly decorative.

And the response, from patients and startups and even Mayo Clinic doctors, is: fine, we’ll use software too. The system forced everyone into a language it invented, and now both sides are speaking it, and somewhere underneath all of it the actual medicine is waiting: the body, the pain, the diagnosis, the treatment that might help. Waiting for the robots to finish arguing.

The most human thing about this whole situation is Holden Karau getting hit by an SUV and realizing her computer science degree was about to become a survival skill. She didn’t build Fight Health Insurance because she saw a market opportunity. She built it because the paperwork was going to bury her, and she had the technical knowledge to dig herself out. Most people don’t have that.

That’s the gap the tools are trying to close. Not the gap between sick people and medicine. The gap between sick people and paperwork. The fact that we need AI to fill out forms to convince AI to let us see doctors is documentation of failure. Every tool in this article exists because people had to build counter-systems just to access what they were already paying for.

Use the tools. Fight the denial. Win if you can. Don’t mistake the counter-weapon for the cure.

Tools Referenced

FightHealthInsurance.com. Free. Upload your denial, get draft appeal letters.

CounterforceHealth.org. Free. Nonprofit. Analyzes denial, pulls policy language, drafts appeal.

Sheer Health. App. Connect your insurance account. Ask plain questions about your coverage.

Claimable. ~$40 flat fee. Full appeal letter generated from your information.

General AI tools (Claude, Gemini, ChatGPT) can also draft appeal letters. Bring your denial letter, your policy documents, and your medical records. Review everything before you submit. The AI is a tool, not a doctor. Tip the publication at stories@theranter.com if you have a denial story worth documenting.

Sources

[1] ProPublica and subsequent reporting describe how Cigna’s PXDX system was used to deny about 300,000 claims in two months in 2022, with one medical director denying roughly 60,000 claims in a single month and reviewers spending an average of 1.2 seconds per claim, often without opening individual patient files, based on automated comparisons of diagnosis and procedure codes. Lawsuits against UnitedHealth similarly allege that its nH Predict tool guided denials where, according to court filings, more than 90% of contested decisions were overturned on appeal.

[2] Profiles from KFF Health News and Managed Healthcare Executive recount how Bay Area software engineer Holden Karau, after years of battling health insurance denials including following a serious vehicle accident, launched Fight Health Insurance in 2023, a tool that lets patients upload denial letters and receive AI-generated draft appeals, offered free for individual users and supported by paid enterprise services.

[3] Counterforce Health, a nonprofit based in North Carolina’s Research Triangle, offers a free AI assistant that analyzes insurance denial letters, pulls policy language, and drafts appeals for patients, while charging hospitals and health systems for professional versions. Counterforce’s tools and similar AI-based appeal strategies have been highlighted by some Mayo Clinic clinicians in educational presentations on how patients can fight denials, alongside general-purpose AI chatbots.

[4] Sheer Health provides an app that connects to users’ insurance accounts to help them ask plain-language questions about their benefits, understand what is covered, and manage claims. Claimable offers an AI-powered service that prepares full appeal letters from patient-provided information, charging a flat fee of roughly $40 per appeal.

[5] The 2024 KFF Health Misinformation Tracking Poll on Artificial Intelligence and Health Information reports that 17% of U.S. adults use AI chatbots at least once a month for health information and advice, rising to 25% (one in four) among adults ages 18-29.

[6] A 2024 U.S. Senate investigation into Medicare Advantage prior-authorization practices found that CVS Health initially projected $10-15 million in savings over three years from an algorithm-driven utilization-management program but later estimated $77.3 million in savings over the same period, far exceeding its original expectations.

[7] In a PBS NewsHour segment on patients using AI to contest health insurance denials, Indiana University law professor Jennifer Oliva warned that “we’re in an AI arms race,” arguing that as consumers become more empowered by AI-based tools to fight back, insurers will respond by escalating their own use of AI.

[8] Stateline’s reporting on AI-generated health insurance appeals quotes Harvard Law School professor Carmel Shachar cautioning that “it can be difficult for a layperson to understand when AI is doing good work and when it is hallucinating or giving something that isn’t quite accurate,” emphasizing that fabricated or inaccurate medical citations in AI-generated letters can undermine patients’ appeals.

TheRanter.com | stories@theranter.com | @RanterMarkus
